taking the intermediate point in between two points in an embedding space produces a point that represents the “intermediate meaning” between the corresponding tokens. The embedding spaces they learn are semantically interpolative, i.e. The word2vec space also verified this property. moving a bit in an embedding spaces only changes the human-facing meaning of the corresponding tokens by a bit. The embedding spaces they learn are semantically continuous, i.e. Self-attention confers Transformers with two crucial properties: How self-attention works: here attention scores are computed between “station” and every other word in the sequence, and they are then used to weight a sum of word vectors that becomes the new “station” vector. Transformers work by learning a series of incrementally refined embedding spaces, each based on recombining elements from the previous one. It will tend to pull together the vectors of already-close tokens - resulting over time in a space where token correlation relationships turn into embedding proximity relationships (in terms of cosine distance). It’s a mechanism for learning a new token embedding space by linearly recombining token embeddings from some prior space, in weighted combinations which give greater importance to tokens that are already “closer” to each other (i.e., that have a higher dot-product). Self-attention is the single most important component in the Transformer architecture. In practice, LLMs do seem to encode correlated tokens in close locations, so there must be a connection.

How is that related to word2vec’s objective of maximizing the dot product between co-occurring tokens? You may ask - wait, I was told that LLMs were autoregressive models, trained to predict the next word conditioned on the previous word sequence. Even the dimensionality of the embedding space is similar: on the order of 10e3 or 10e4.

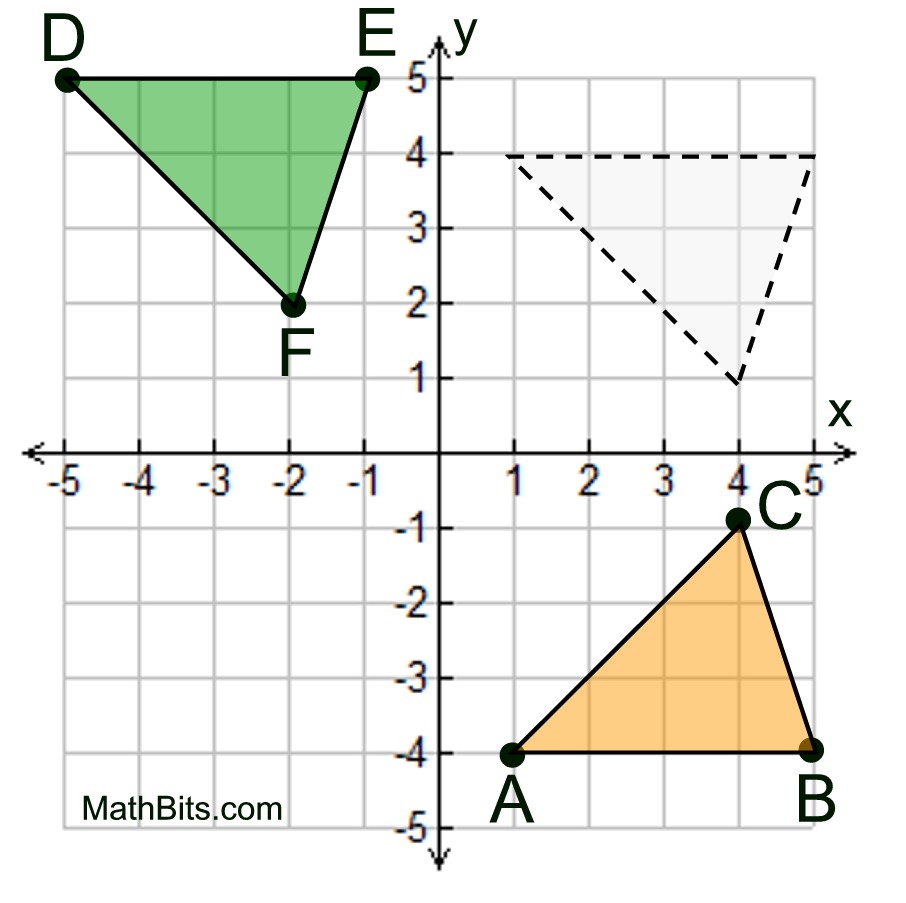

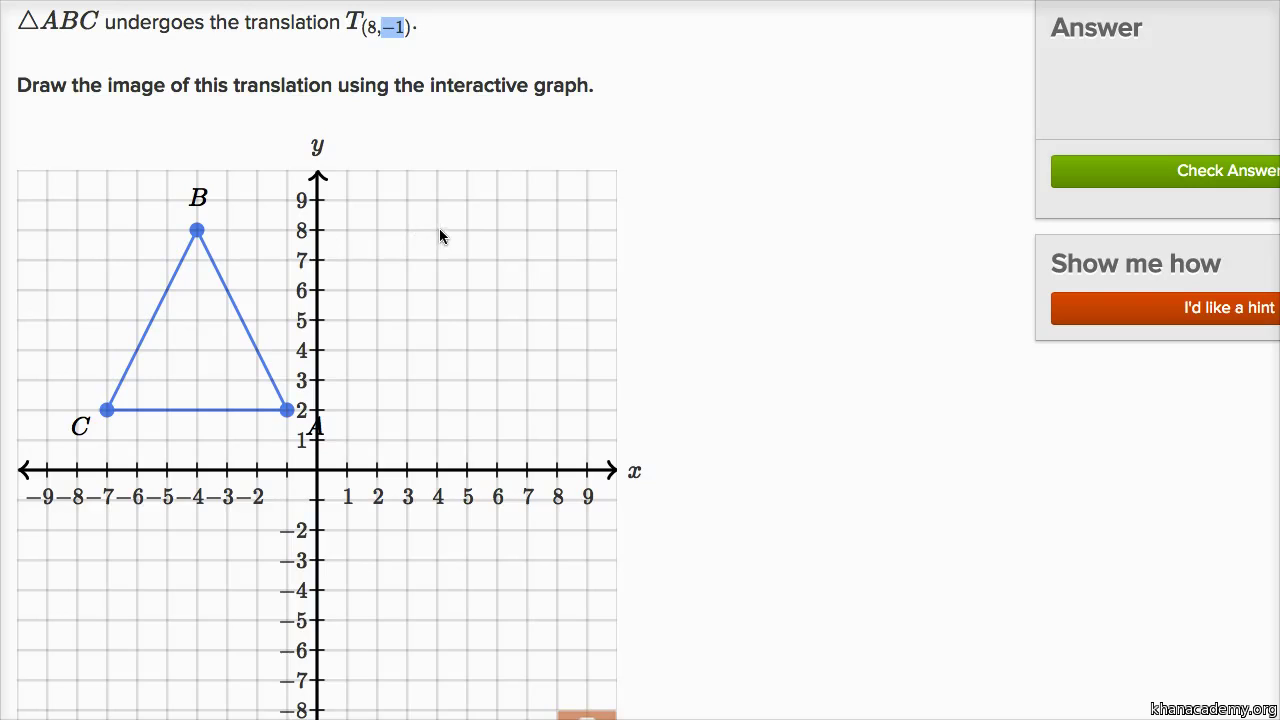

The distance function used to compare tokens is the same in both cases: cosine distance. Both rely on the same fundamental principle to learn this space: tokens that appear together end up close together in the embedding space. And yet, they actually have a lot in common with good old word2vec.īoth are about embedding tokens (words or sub-words) in a vector space. They generate perfectly fluent language - a feat word2vec was entirely incapable of - and seem knowledgeable about any topic. On the surface, modern LLMs couldn’t seem any further from the primitive word2vec model. Pretty cool! There seemed to be dozens of such magic vectors - a plural vector, a vector to go from wild animals names to their closest pet equivalent, etc.įast forward ten years - we are now in the age of LLMs. As in: V(king) - V(man) + V(woman) = V(queen). There existed a vector in the space that could be added to any male noun to obtain a point that would land close to its female equivalent. It featured some form of emergent learning - it was capable of performing “word arithmetic”, something that it had not been trained to do. They found that the resulting embedding space did much more than capture semantic similarity. Their model used an optimization objective designed to turn correlation relationships between words into distance relationships in the embedding space: a vector was associated to each word in a vocabulary, and the vectors were optimized so that the dot-product (cosine proximity) between vectors representing frequently co-occurring words would be closer to 1, while the dot-product between vectors representing rarely co-occurring would be closer to 0. They were building a model to embed words in a vector space - a problem that already had a long academic history at the time, starting in the 1980s. The coordinates of \(ABCD\) are \(A\)(negative 4 comma 1), \(B\)(negative 1 comma 0), \(C\)(1 comma 3)and \(D\)(negative 2 comma 3). Vertical axis scale negative 1 to 4 by 1’s. 
Horizontal axis scale negative 5 to 7 by 1’s. \( \newcommand\): Quadrilateral \(ABCD\) and its image quadrilateral \(A'B'C'\) and \(D'\) on a coordinate plane, origin \(O\).
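To make the self-attention idea concrete, here is a minimal sketch of one attention step: each token gets a new vector that is a weighted sum of all token vectors, with weights derived from dot-products. It is a toy illustration, not how a real Transformer is implemented. The function names (`self_attention`, `softmax`, `cosine`), the 2-D vectors, and the three example tokens are all hypothetical. The sketch also assumes softmax-normalized weights with the usual square-root-of-dimension scaling, and it leaves out the learned query, key, and value projections that real models use.

```python
import math

def dot(u, v):
    """Dot-product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def cosine(u, v):
    """Cosine similarity between two vectors."""
    return dot(u, v) / math.sqrt(dot(u, u) * dot(v, v))

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Return one new vector per token: a weighted sum of all input vectors.

    Simplification (assumption): no learned projections; the raw
    embeddings play the role of queries, keys, and values.
    """
    dim = len(vectors[0])
    new_vectors = []
    for query in vectors:
        # Attention scores: scaled dot-product with every token, itself included.
        scores = [dot(query, other) / math.sqrt(dim) for other in vectors]
        weights = softmax(scores)
        # New vector: linear recombination of the prior-space vectors.
        new_vectors.append([
            sum(w * vec[i] for w, vec in zip(weights, vectors))
            for i in range(dim)
        ])
    return new_vectors

# Hypothetical 2-D embeddings, made up for illustration only.
tokens = ["train", "station", "banana"]
vectors = [[1.0, 0.2], [0.8, 0.6], [-0.5, 1.0]]

updated = self_attention(vectors)
print("before:", cosine(vectors[0], vectors[1]))
print("after: ", cosine(updated[0], updated[1]))
```

On these made-up vectors, the cosine similarity between “train” and “station” comes out higher after the attention step than before it, which is the “pull together already-close tokens” effect in miniature. Because every new vector is a weighted average of existing ones, the output also stays inside the region spanned by the input embeddings.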
